L'IA peut écrire du code, mais ce sont les compétences qui le sécurisent.

Notre plateforme de codage sécurisé pour les entreprises permet de développer les compétences nécessaires pour sécuriser à la fois le code humain et celui généré par l'IA sans ralentir la livraison.

AI accelerates code. AI security skills must keep pace.

AI coding assistants can generate production-ready code in seconds. But speed does not equal security. AI security training helps developers identify vulnerabilities in AI-generated code, prevent prompt injection, and apply secure coding practices across modern AI workflows.

Nearly 45% of AI-generated code contains known security vulnerabilities. Securing AI-generated code starts with developer capability to identify and fix risks before code reaches production.

Build developer capability for secure AI development

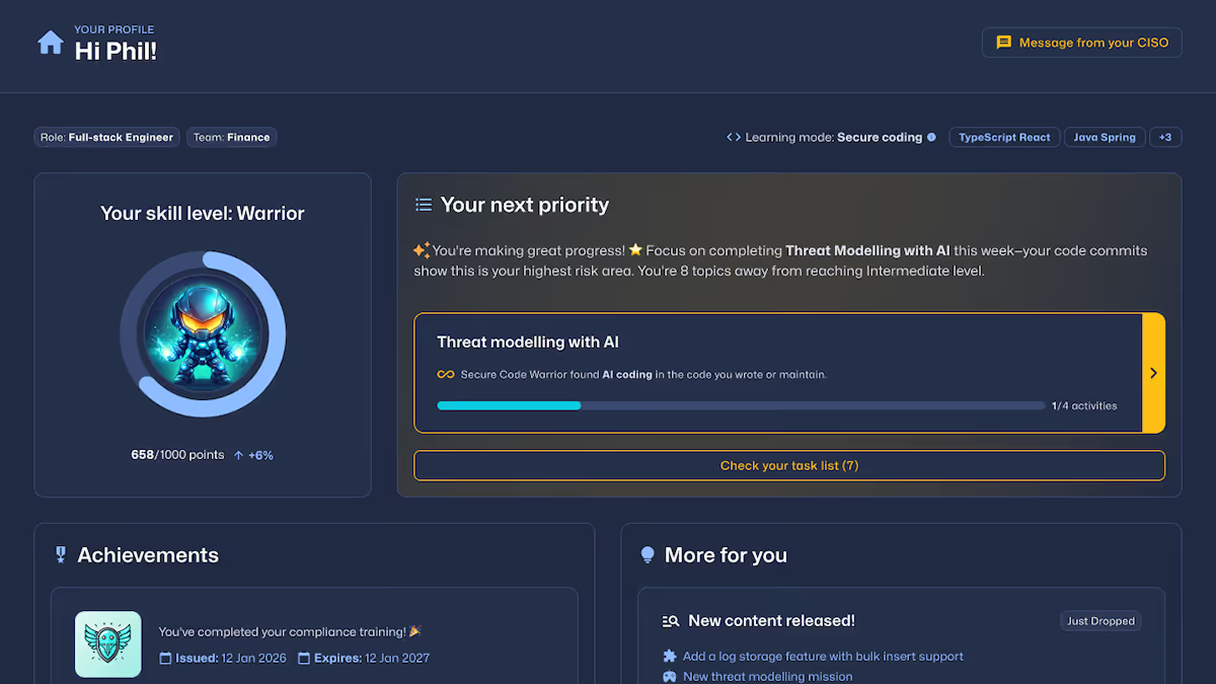

Secure Code Warrior Learning provides AI security training that builds the skills behind every commit. Developers learn to secure AI-generated code through hands-on practice across real-world AI workflows, reducing risk at the source.

Comprehensive AI security training for modern development

AI security challenges for developers

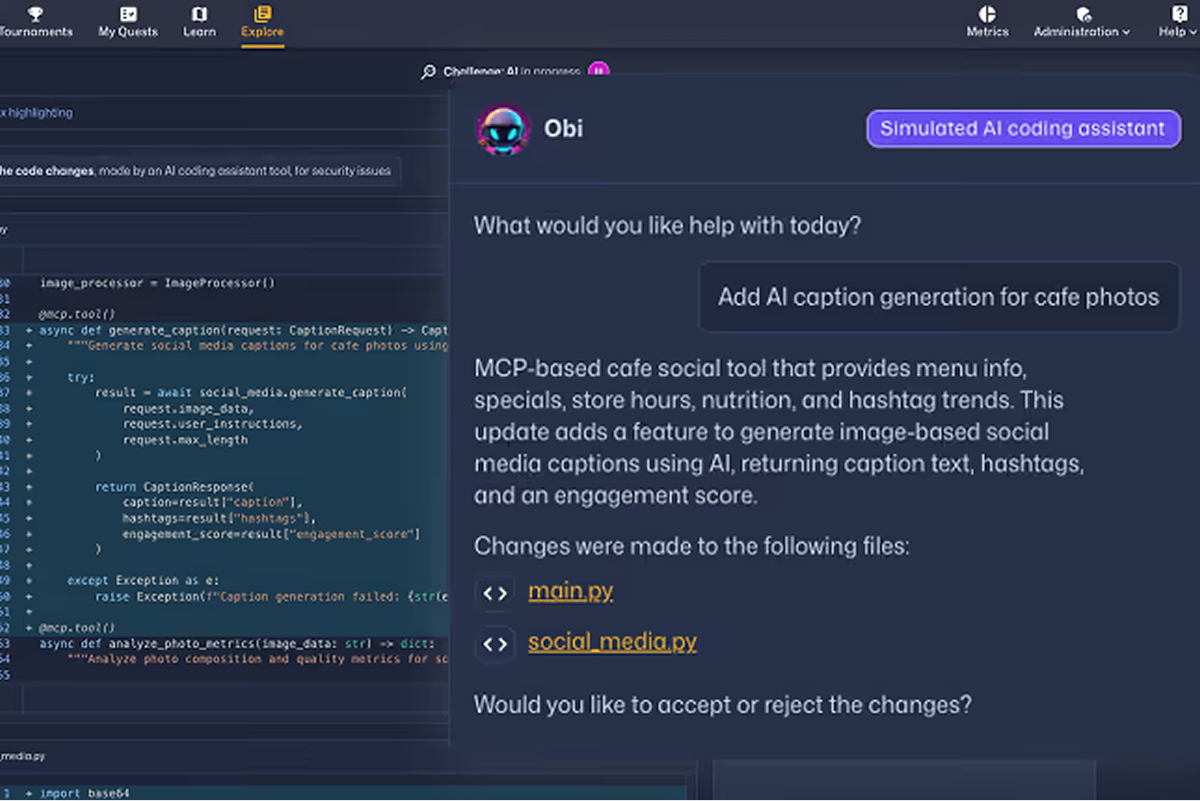

Developers learn to secure AI-generated code through interactive challenges that simulate real-world AI workflows. Learn to detect insecure patterns, validate outputs, and prevent vulnerabilities in a safe, controlled environment.

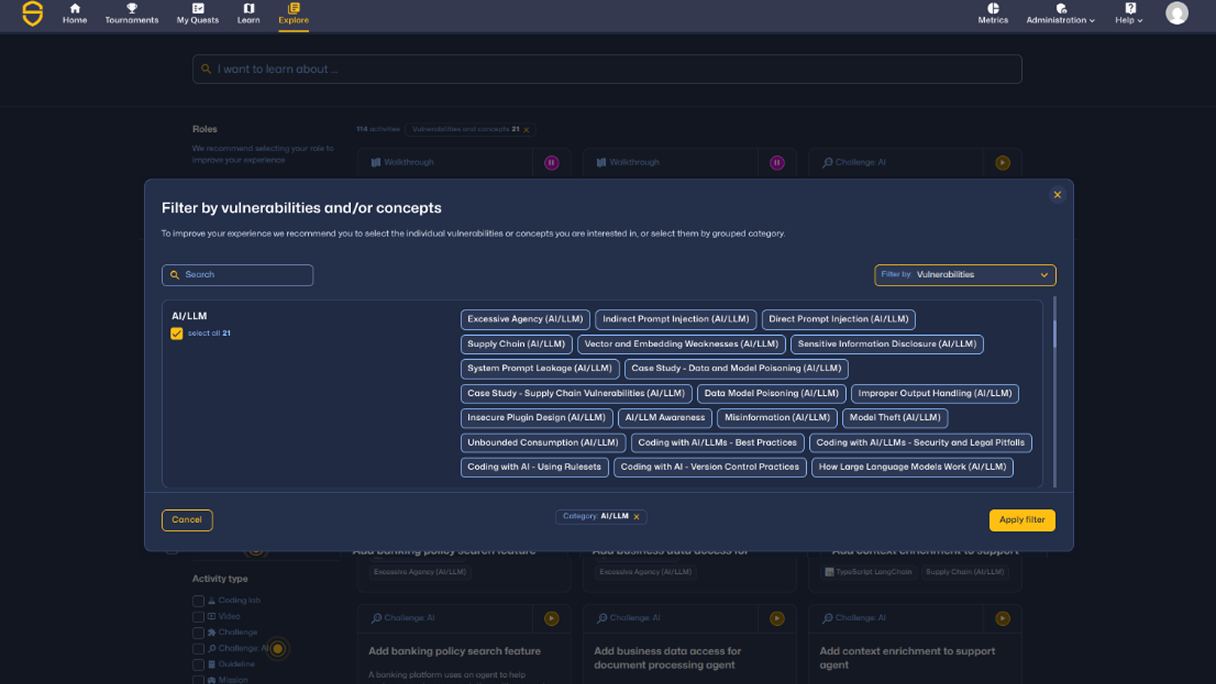

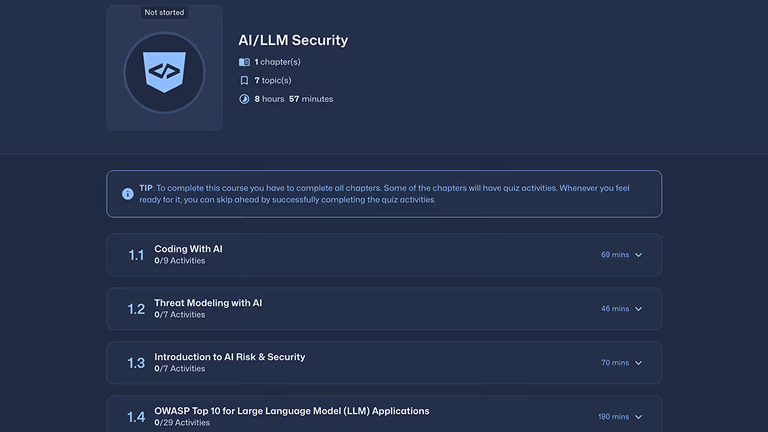

AI and LLM vulnerability training

Learning covers emerging AI vulnerabilities including prompt injection, excessive agency, system prompt leakage, sensitive data exposure, and vector and embedding weaknesses.

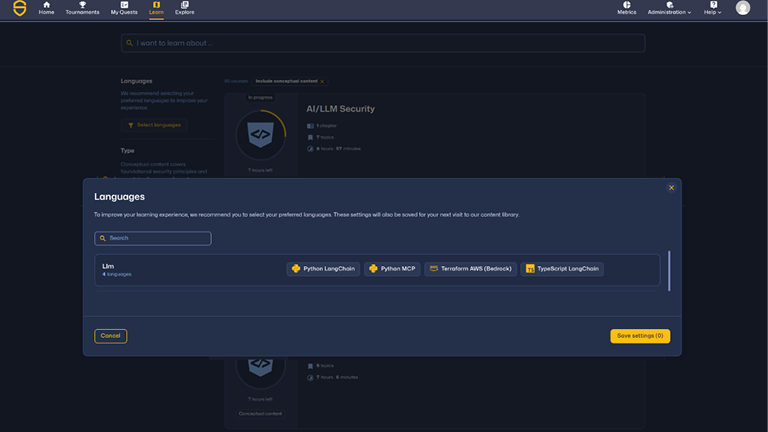

Modern AI frameworks and environments

Developers train across production AI technologies including Python (LangChain, MCP), Terraform (AWS Bedrock), and modern backend frameworks powering AI applications.

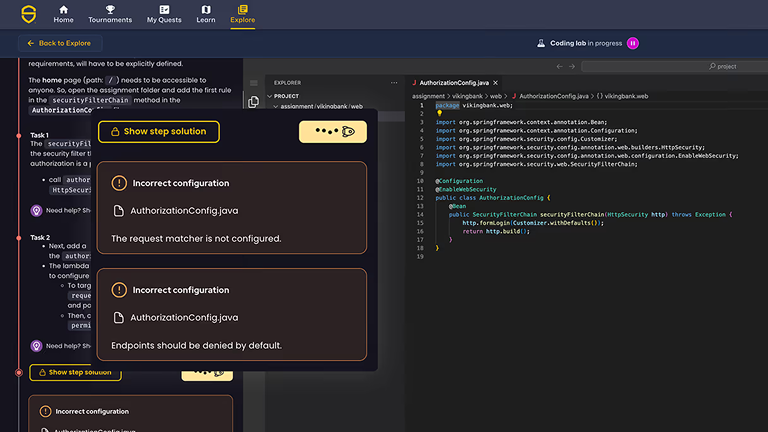

LLM missions and coding labs

Developers build capability through immersive Missions and hands-on Coding Labs that simulate real-world AI security scenarios and vulnerability exploitation patterns.

AI security concepts and design patterns

Developers learn how to securely use AI through topics like AI risk and security, threat modeling with AI, OWASP Top 10 for LLMs, and AI agent protocols (MCP, A2A, ACP).

Practice securing AI-generated code in real and simulated development workflows

Hands-on AI security training across guided and real-world scenarios. Developers build AI secure coding capability through Quests, Coding Labs, AI Challenges, and Missions.

Quêtes

Laboratoires de codage

Les défis de l'IA

Check out the SCW Learning Content Guide which outlines the breadth and depth of training available across the Secure Code Warrior platform, including secure coding vulnerabilities, AI security topics, programming languages, frameworks, and role-based learning paths.

Missions

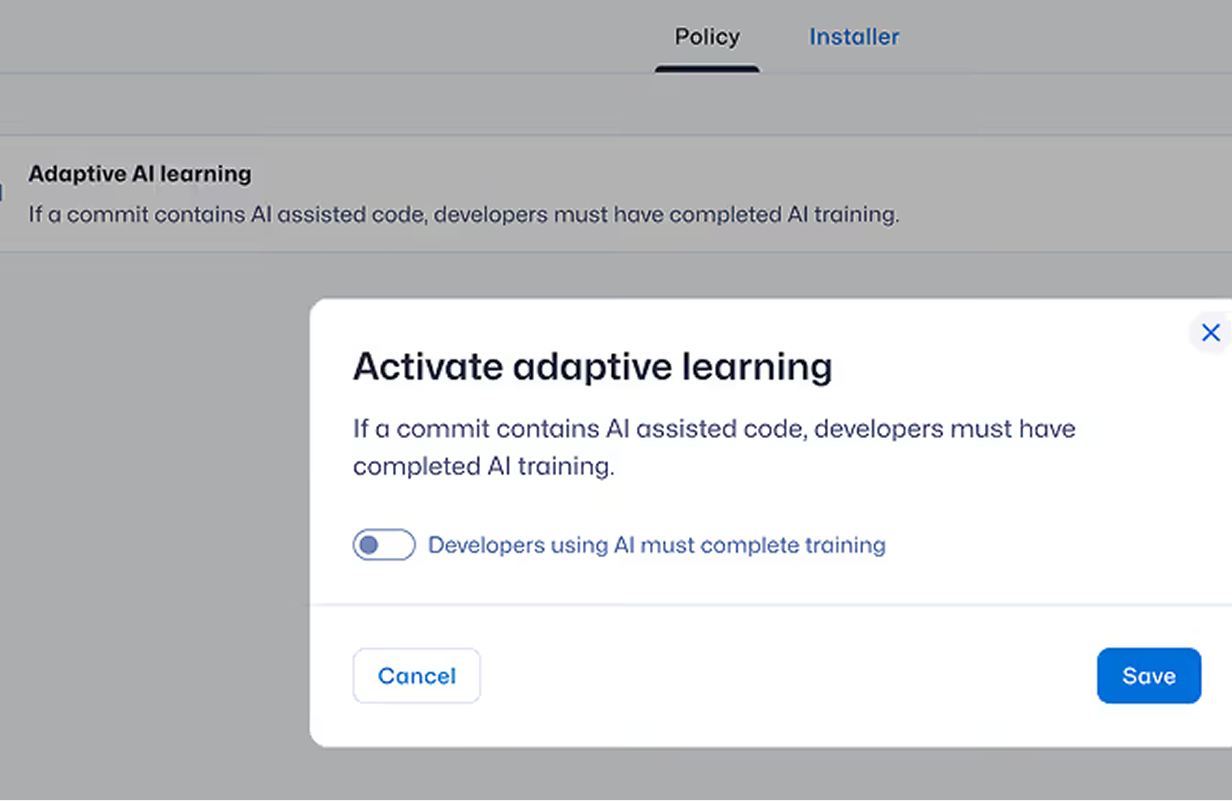

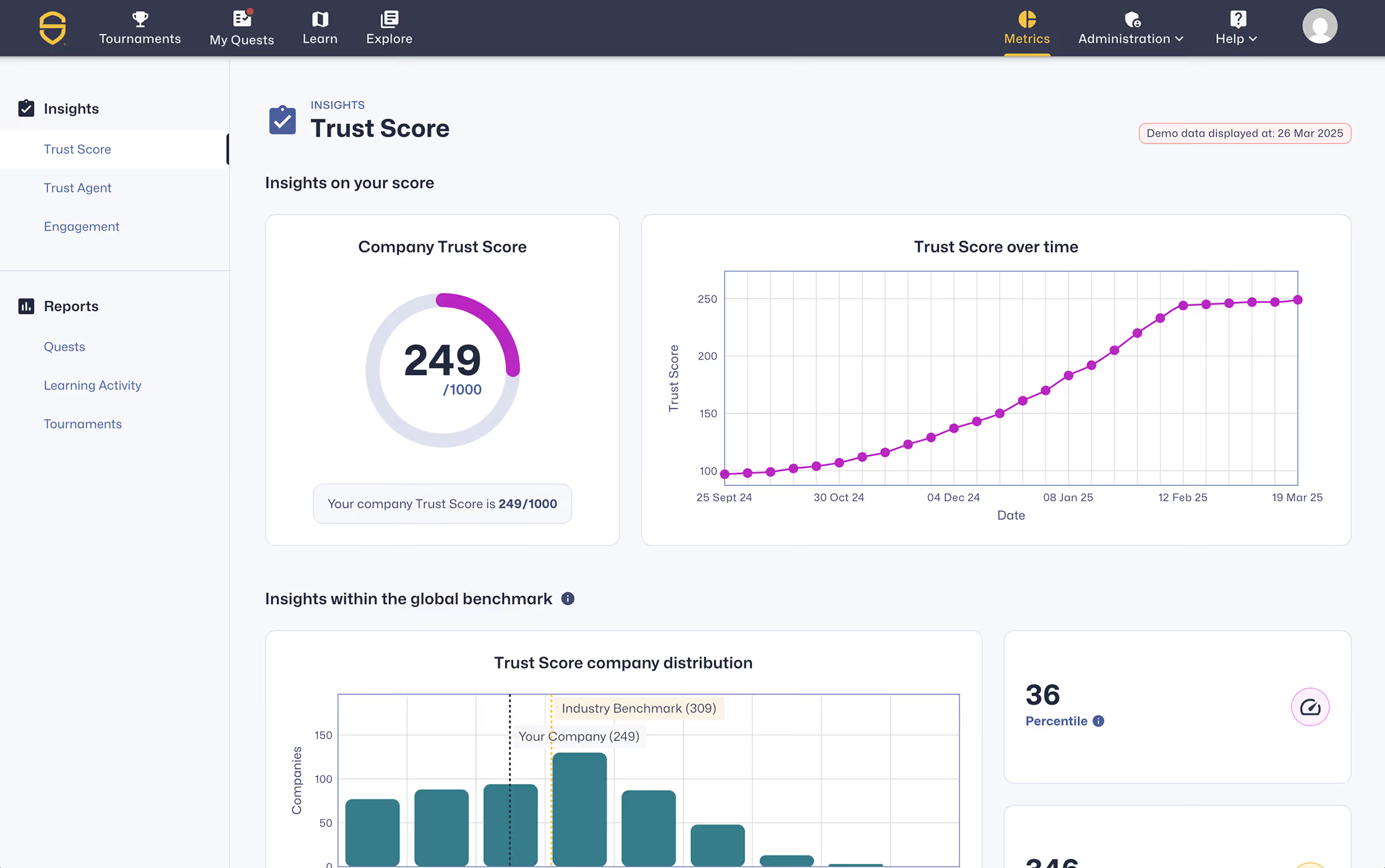

Reduce AI-driven risk at the source of code creation through developer training

Secure Code Warrior delivers AI security training that builds developer capability to identify and prevent vulnerabilities in both human-written and AI-generated code. Through hands-on learning and real-world AI security scenarios, organizations reduce recurring vulnerabilities, strengthen secure coding behavior, and demonstrate measurable improvement across modern development workflows.

ation pour remédier aux problèmes

activities

What developers learn in AI security training

Coverage spans LLM vulnerabilities, agent protocols, infrastructure security, and foundational AI security design — mapped to real developer workflows.

Practice real-world AI and LLM security risks.

AI security training teaches developers how to identify, prevent, and remediate vulnerabilities in AI-generated code and modern AI systems, including:

Build foundational AI security knowledge

Developers learn how to securely design and review AI systems through:

Secure AI agents, protocols, and cloud AI environments

Understand and mitigate risks across agent-based systems and AI infrastructure, including MCP and cloud AI services:

Secure AI services and model integrations

Model Context Protocol — Secure AI agents and protocol interactions

Security, engineering, and learning leaders responsible for secure development

Support secure AI development with role-specific capabilities tailored to your organization’s needs.

Secure AI-generated code starts with trained developers

Renforcez les compétences en matière de codage sécurisé, réduisez les vulnérabilités introduites et instaurez une confiance mesurable envers les développeurs au sein de votre organisation.

Secure AI-assisted development starts with developer capability

Learn how Secure Code Warrior helps teams adopt AI safely, reduce risk, and build measurable developer capability.